What Is an Anomaly in a Database?

Posted: admin, on 11.08.2019

About Anomaly Detection

The goal of anomaly detection is to identify cases that are unusual within data that is seemingly homogeneous. Anomaly detection is an important tool for detecting fraud, network intrusion, and other rare events that may have great significance but are hard to find.

Anomaly detection can be used to solve problems like the following:

A law enforcement agency compiles data about illegal activities, but nothing about legitimate activities.

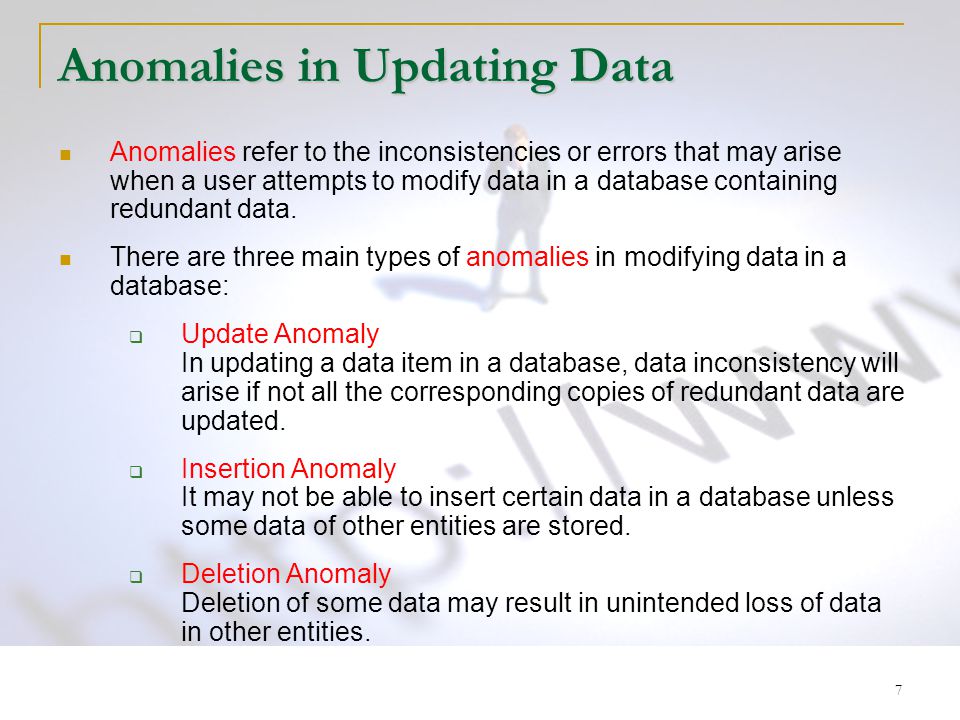

Anomalies are problems that arise in the data due to a flaw in the database design. There are three types of anomalies:

- Insertion anomalies occur when we try to insert data into a flawed table: certain attributes cannot be inserted into the database without the presence of other attributes. For example, we can't add a new course unless at least one student is enrolled in it. This is the converse of a deletion anomaly.
- Deletion anomalies occur when we delete data from a flawed schema, and removing one fact unintentionally removes another.
- Update anomalies occur when the same fact is stored in several rows, so changing one copy without the others leaves the data inconsistent.

In software testing, an anomaly is any result that differs from the expected one. The expectation may come from a document, such as a specification, or from a tester's own notions and experience. An anomaly can also refer to a usability problem: the software may behave as specified and still leave room for improvement in usability.
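To make the three database anomalies concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `enrollment` table, its columns, and the course data are hypothetical; the table deliberately stores the course title on every enrollment row so that each anomaly can be reproduced.

```python
import sqlite3

# Hypothetical unnormalized table: every enrollment row repeats the course title.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE enrollment (
        student_id   INTEGER NOT NULL,
        course_id    TEXT    NOT NULL,
        course_title TEXT    NOT NULL,
        PRIMARY KEY (student_id, course_id)
    )
""")
conn.executemany(
    "INSERT INTO enrollment VALUES (?, ?, ?)",
    [(1, "DB101", "Databases"), (2, "DB101", "Databases"), (3, "ML201", "Machine Learning")],
)

# Insertion anomaly: a course with no students cannot be recorded,
# because student_id is part of the key and must not be NULL.
try:
    conn.execute("INSERT INTO enrollment VALUES (NULL, 'CS301', 'Compilers')")
    insert_blocked = False
except sqlite3.IntegrityError:
    insert_blocked = True

# Update anomaly: renaming the course on one row leaves the other copy stale.
conn.execute(
    "UPDATE enrollment SET course_title = 'Intro to Databases' "
    "WHERE student_id = 1 AND course_id = 'DB101'"
)
db101_titles = {title for (title,) in conn.execute(
    "SELECT course_title FROM enrollment WHERE course_id = 'DB101'")}

# Deletion anomaly: removing the only ML201 student also erases the course itself.
conn.execute("DELETE FROM enrollment WHERE student_id = 3")
ml201_known = conn.execute(
    "SELECT COUNT(*) FROM enrollment WHERE course_id = 'ML201'").fetchone()[0] > 0

print(insert_blocked, sorted(db101_titles), ml201_known)
# prints: True ['Databases', 'Intro to Databases'] False
```

Splitting courses into their own table, keyed by `course_id`, would remove all three problems, which is exactly what normalization does.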

How can suspicious activity be flagged? The law enforcement data is all of one class. There are no counter-examples.

An insurance agency processes a large number of insurance claims, knowing that a very small number are fraudulent. How can the fraudulent claims be identified? The claims data contains very few counter-examples. They are outliers.


One-Class Classification

Anomaly detection is a form of classification. See "About Classification" on page 5-1 for an overview of the classification mining function. Anomaly detection is implemented as one-class classification, because only one class is represented in the training data.

An anomaly detection model predicts whether a data point is typical for a given distribution or not. An atypical data point can be either an outlier or an example of a previously unseen class.

Normally, a classification model must be trained on data that includes both examples and counter-examples for each class, so that the model can learn to distinguish between them. For example, a model that predicts side effects of a medication should be trained on data that includes a wide range of responses to the medication. A one-class classifier, by contrast, develops a profile that generally describes a typical case in the training data.
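As a rough illustration of the profile idea, the sketch below builds a one-class "profile" from normal-only training rows (the mean and standard deviation of each numeric feature) and flags a new row when any feature lies more than a chosen number of standard deviations from its mean. This is a deliberately simple statistical stand-in, not the SVM-based one-class model used by Oracle Data Mining; the features, data, and the threshold of 3 are assumptions for illustration.

```python
from statistics import mean, pstdev

def fit_profile(rows):
    """Summarize normal-only training rows as (mean, std) per feature."""
    profile = []
    for column in zip(*rows):
        spread = pstdev(column) or 1.0  # guard against a constant feature
        profile.append((mean(column), spread))
    return profile

def is_anomaly(profile, row, threshold=3.0):
    """Flag a row if any feature is more than `threshold` std devs from the profile mean."""
    worst = max(abs(x - mu) / sd for x, (mu, sd) in zip(row, profile))
    return worst > threshold

# Hypothetical training data: (age, monthly_spend) for typical customers only.
train = [(34, 120.0), (41, 135.0), (29, 110.0), (38, 128.0), (45, 140.0), (33, 118.0)]
profile = fit_profile(train)

typical = is_anomaly(profile, (37, 125.0))    # close to the profile
unusual = is_anomaly(profile, (37, 400.0))    # spend far outside the profile
print(typical, unusual)  # prints: False True
```

Because the model only ever sees typical cases, the threshold cannot be tuned against real counter-examples, which is one reason one-class classifiers tend to be less accurate than standard classifiers.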

Deviation from the profile is identified as an anomaly. One-class classifiers are sometimes referred to as positive security models, because they seek to identify 'good' behaviors and assume that all other behaviors are bad.

Note: Solving a one-class classification problem can be difficult. The accuracy of one-class classifiers cannot usually match the accuracy of standard classifiers built with meaningful counterexamples. The goal of anomaly detection is to provide some useful information where no information was previously attainable.

However, if there are enough of the 'rare' cases that stratified sampling could produce a training set with sufficient counterexamples for a standard classification model, then that would generally be a better solution.

Anomaly Detection for Single-Class Data

In single-class data, all the cases have the same classification. Counter-examples, instances of another class, may be hard to specify or expensive to collect. For instance, in text document classification, it may be easy to classify a document under a given topic. However, the universe of documents outside of this topic may be very large and diverse. Thus it may not be feasible to specify other types of documents as counter-examples.

Anomaly detection could be used to find unusual instances of a particular type of document.

Anomaly Detection for Finding Outliers

Outliers are cases that are unusual because they fall outside the distribution that is considered normal for the data. For example, census data might show a median household income of $70,000 and a mean household income of $80,000, but one or two households might have an income of $200,000.

These cases would probably be identified as outliers.

The distance from the center of a normal distribution indicates how typical a given point is with respect to the distribution of the data. Each case can be ranked according to the probability that it is either typical or atypical.

The presence of outliers can have a deleterious effect on many forms of data mining.
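The ranking idea can be sketched in a few lines of Python. Because a mean can be pulled toward extreme values (the census example's mean sits above its median), this sketch measures each value's distance from the median in units of the median absolute deviation (MAD), a common robust choice. The income figures and the cut-off of 5 are hypothetical.

```python
from statistics import median

def robust_scores(values):
    """Distance of each value from the median, in units of the median
    absolute deviation (MAD). Larger scores mean less typical."""
    center = median(values)
    mad = median(abs(v - center) for v in values) or 1.0  # avoid dividing by zero
    return [abs(v - center) / mad for v in values]

# Hypothetical household incomes; one household earns far more than the rest.
incomes = [52_000, 61_000, 68_000, 70_000, 72_000, 75_000, 83_000, 200_000]
scores = robust_scores(incomes)

# Rank households from least to most typical.
ranked = sorted(zip(incomes, scores), key=lambda pair: pair[1], reverse=True)
outliers = [income for income, score in ranked if score > 5]  # hypothetical cut-off
print(ranked[0], outliers)
```

Here the median is 71,000 and the MAD is 7,000, so the 200,000 household ranks first with a score of about 18.4, while every other household scores below 3.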

Anomaly detection can be used to identify outliers before mining the data.

Example: Scoring New Data

Suppose that you have a new customer, and you want to assess how closely he resembles a typical customer in your existing customer database. You can use the anomaly detection model to score the new customer data. The new customer is a 40-year-old male executive who has a bachelor's degree and uses an affinity card. This example uses the SQL function PREDICTION_PROBABILITY to apply the model svmo_sh_clas_sample (the sample anomaly detection model supplied with the Oracle Data Mining sample programs).

The function returns .05, indicating a 5% probability that the new customer is typical. This means that 95% of your customers are more like your average customer than he is.

The new customer is something of an anomaly.

```sql
COLUMN prob_typical FORMAT 9.99
SELECT PREDICTION_PROBABILITY (svmo_sh_clas_sample, 1 USING
                               'M' AS cust_gender,
                               'Bach.' AS education,
                               'Exec.' AS occupation,
                               40 AS age,
                               '1' AS affinity_card) prob_typical
  FROM DUAL;

PROB_TYPICAL
------------
         .05
```

Normalization is the process of organizing a database to reduce redundancy and improve data integrity.

Normalization also simplifies the database design so that it achieves the optimal structure composed of atomic elements (i.e. elements that cannot be broken down into smaller parts). Also referred to as database normalization or data normalization, normalization is an important part of relational database design, as it helps with the speed, accuracy, and efficiency of the database.

By normalizing a database, you arrange the data into tables and columns. You ensure that each table contains only related data. If data is not directly related, you create a new table for that data.

For example, if you have a “Customers” table, you'd normally create a separate table for the products they can order (you could call this table “Products”). You'd create another table for customers' orders (perhaps called “Orders”). And if each order could contain multiple items, you'd typically create yet another table to store each order item (perhaps called “OrderItems”).

All these tables would be linked through their primary and foreign keys, which allows you to find related data across all these tables (such as all orders placed by a given customer).

Advantages of Normalization

There are many advantages of normalizing a database.
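Here is a minimal sketch of the Customers/Products/Orders/OrderItems design described above, using Python's built-in `sqlite3` module. The column names and sample rows are hypothetical; the point is that each table holds one kind of fact and the tables are linked by keys, so a join can recover, for example, all orders placed by a given customer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    PRAGMA foreign_keys = ON;
    CREATE TABLE Customers (
        CustomerId INTEGER PRIMARY KEY,
        Name       TEXT NOT NULL
    );
    CREATE TABLE Products (
        ProductId INTEGER PRIMARY KEY,
        Name      TEXT NOT NULL,
        Price     REAL NOT NULL
    );
    CREATE TABLE Orders (
        OrderId    INTEGER PRIMARY KEY,
        CustomerId INTEGER NOT NULL REFERENCES Customers(CustomerId),
        OrderDate  TEXT NOT NULL
    );
    -- One row per product within an order; the pair is the key.
    CREATE TABLE OrderItems (
        OrderId   INTEGER NOT NULL REFERENCES Orders(OrderId),
        ProductId INTEGER NOT NULL REFERENCES Products(ProductId),
        Quantity  INTEGER NOT NULL,
        PRIMARY KEY (OrderId, ProductId)
    );
    INSERT INTO Customers VALUES (1, 'Ada'), (2, 'Grace');
    INSERT INTO Products VALUES (10, 'Keyboard', 49.0), (11, 'Mouse', 19.0);
    INSERT INTO Orders VALUES (100, 1, '2019-08-01'), (101, 2, '2019-08-02'), (102, 1, '2019-08-05');
    INSERT INTO OrderItems VALUES (100, 10, 1), (100, 11, 2), (101, 11, 1), (102, 10, 1);
""")

# All items ordered by customer 1, reassembled from three tables via their keys.
rows = conn.execute("""
    SELECT o.OrderId, p.Name, oi.Quantity
    FROM Orders AS o
    JOIN OrderItems AS oi ON oi.OrderId = o.OrderId
    JOIN Products AS p ON p.ProductId = oi.ProductId
    WHERE o.CustomerId = ?
    ORDER BY o.OrderId, p.Name
""", (1,)).fetchall()
print(rows)  # prints: [(100, 'Keyboard', 1), (100, 'Mouse', 2), (102, 'Keyboard', 1)]
```

Product names and prices are stored once in `Products`, so renaming a product is a single-row update rather than an edit to every order that mentions it.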

Here are some of the key benefits:

- Minimizes data redundancy (duplicate data).
- Minimizes null values.
- Results in a more compact database (due to less data redundancy and fewer null values).
- Minimizes or avoids data modification issues.
- Simplifies queries.
- The database structure is cleaner and easier to understand. You can learn a lot about a relational database just by looking at its schema.
- You can extend the database without necessarily impacting the existing data.
- Searching, sorting, and creating indexes can be faster, since tables are narrower and more rows fit on a data page.

Example of a Normalized Database

When designing a relational database, one typically normalizes the data before creating a schema. The database schema determines the organization and the structure of the database, basically how the data will be stored.

Here's an example of a normalized database schema.

(Figure: A basic schema diagram of a normalized database.)

This schema separates the data into three different tables. Each table is very specific in the data that it stores: there's one table for albums, one for artists, and another that holds data that's specific to genre.

(Figure: Example of a report in Microsoft Access. The report shows a list of albums, grouped by artist.)

With a report like this, the user can view the data exactly as desired, regardless of its normalized structure within the database. Another advantage of this approach is that it's possible to make changes to the underlying data structure without affecting the users' view of that data.

Levels of Normalization

There are various levels of normalization, each one building on the previous level.

The most basic level of normalization is first normal form (1NF), followed by second normal form (2NF). Most of today's transactional databases are normalized in third normal form (3NF).

For a database to satisfy a given level, it must satisfy the rules of all lower levels, as well as the rule(s) for the given level. For example, to be in 3NF, a database must conform to 1NF and 2NF as well as 3NF.

The levels of normalization are listed below, in order of strength (with UNF being the weakest):

- UNF (Unnormalized Form): A database is in UNF if it has not been normalized at all.
- 1NF (First Normal Form): A relation (table) is in 1NF if (and only if) the domain of each attribute contains only atomic (indivisible) values, and the value of each attribute contains only a single value from that domain.
- 2NF (Second Normal Form): A relation is in 2NF if it is in 1NF and every non-prime attribute of the relation is dependent on the whole of every candidate key.


- 3NF (Third Normal Form): A relation is in 3NF if it is in 2NF and every non-prime attribute of the relation is non-transitively dependent on every key of the relation.
- EKNF (Elementary Key Normal Form): EKNF is a subtle enhancement on 3NF. A relation is in EKNF if and only if all its elementary functional dependencies begin at whole keys or end at elementary key attributes.


- BCNF (Boyce-Codd Normal Form): BCNF is a slightly stronger version of 3NF. A relation is in Boyce-Codd normal form if and only if, for every one of its dependencies X → Y, at least one of the following conditions holds:
  - X → Y is a trivial functional dependency (Y ⊆ X).
  - X is a superkey for schema R.
- 4NF (Fourth Normal Form): A relation is in 4NF if and only if, for every one of its non-trivial multivalued dependencies X ↠ Y, X is a superkey; that is, X is either a candidate key or a superset thereof.
- ETNF (Essential Tuple Normal Form): A relation schema is in ETNF if and only if it is in Boyce-Codd normal form and some component of every explicitly declared join dependency of the schema is a superkey.
- 5NF (Fifth Normal Form): A relation is in 5NF if and only if every non-trivial join dependency in that table is implied by the candidate keys.
- 6NF (Sixth Normal Form): A relation is in 6NF if and only if every join dependency of the relation is trivial, where a join dependency is trivial if and only if one of its components is equal to the relation heading in its entirety.

- DKNF (Domain-Key Normal Form): A relation is in DKNF when every constraint on the relation is a logical consequence of the definition of keys and domains, and enforcing key and domain constraints and conditions causes all constraints to be met. Thus, it avoids all non-temporal anomalies.

3NF is strong enough to satisfy most applications, and the higher levels are rarely used, except in specific circumstances where the data (and its usage) requires it.
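The 2NF, 3NF, and BCNF definitions above are stated in terms of keys and functional dependencies, so for small schemas they can be checked mechanically. The sketch below is a hypothetical helper, not part of any library: it computes attribute closures, brute-forces the candidate keys, and reports which dependencies break each form. The three example schemas are illustrative assumptions.

```python
from itertools import combinations

def fd(lhs, rhs):
    """Build a functional dependency lhs -> rhs from two lists of attribute names."""
    return (frozenset(lhs), frozenset(rhs))

def closure(attrs, fds):
    """All attributes determined by `attrs` (apply FDs until nothing changes)."""
    result = set(attrs)
    changed = True
    while changed:
        changed = False
        for lhs, rhs in fds:
            if lhs <= result and not rhs <= result:
                result |= rhs
                changed = True
    return frozenset(result)

def candidate_keys(relation, fds):
    """Minimal attribute sets whose closure is the whole relation (brute force)."""
    keys = []
    for size in range(1, len(relation) + 1):
        for combo in combinations(sorted(relation), size):
            attrs = frozenset(combo)
            if any(key <= attrs for key in keys):
                continue  # contains a smaller key, so it is not minimal
            if closure(attrs, fds) >= relation:
                keys.append(attrs)
    return keys

def violations(relation, fds):
    """Map each form to the (determinant, attribute) pairs that violate it."""
    keys = candidate_keys(relation, fds)
    prime = frozenset().union(*keys)
    found = {"2NF": set(), "3NF": set(), "BCNF": set()}
    # 2NF: a non-prime attribute determined by a proper subset of a candidate key.
    for key in keys:
        for dropped in key:
            part = key - {dropped}
            if part:
                for attr in closure(part, fds) - prime:
                    found["2NF"].add((tuple(sorted(part)), attr))
    # 3NF / BCNF: each non-trivial X -> A where X is not a superkey.
    for lhs, rhs in fds:
        if closure(lhs, fds) >= relation:
            continue  # X is a superkey: allowed by both forms
        for attr in rhs - lhs:
            found["BCNF"].add((tuple(sorted(lhs)), attr))
            if attr not in prime:  # 3NF still allows a prime right-hand side
                found["3NF"].add((tuple(sorted(lhs)), attr))
    return {form: sorted(pairs) for form, pairs in found.items()}

# Partial dependency: course_title depends on course_id alone.
enrollment = violations(
    frozenset(["student_id", "course_id", "grade", "course_title"]),
    [fd(["student_id", "course_id"], ["grade"]), fd(["course_id"], ["course_title"])],
)
# Transitive dependency: emp_id -> dept_id -> dept_name.
employee = violations(
    frozenset(["emp_id", "dept_id", "dept_name"]),
    [fd(["emp_id"], ["dept_id"]), fd(["dept_id"], ["dept_name"])],
)
# In 3NF but not BCNF: instructor -> course, and course is prime.
teaching = violations(
    frozenset(["student", "course", "instructor"]),
    [fd(["student", "course"], ["instructor"]), fd(["instructor"], ["course"])],
)
print(enrollment, employee, teaching, sep="\n")
```

The key search is exponential in the number of attributes, so this only suits small, textbook-sized schemas. Checking just the listed dependencies is enough for the 3NF and BCNF tests on a whole relation, while the 2NF test uses closures so that partial dependencies reached through several FDs are also caught.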

Normalizing an Existing Database

Normalization can also be applied to existing databases that may not have been normalized sufficiently, although this could get quite complex, depending on how the existing database is designed and how heavily and frequently it's used. You may also need to change the way the normalization was done by denormalizing it first, then normalizing it to the form that you require.

When to Normalize the Data

Normalization is particularly important for OLTP (online transaction processing) systems, where inserts, updates, and deletes occur rapidly and are typically initiated by end users.

On the other hand, normalization is not always considered important for data warehouses and OLAP (online analytical processing) systems, where data is often denormalized in order to improve the performance of the queries that need to be run in that context.

When to Denormalize the Data

There are some situations where you might be better off denormalizing a database. Many data warehouses and OLAP applications use denormalized databases.

The main reason for this is performance.
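As a minimal sketch of denormalizing for read performance, the code below copies a join of hypothetical `Artists` and `Albums` tables (echoing the albums-and-artists schema described earlier) into a single wide reporting table using SQLite's `CREATE TABLE ... AS SELECT`. The table names, columns, and rows are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Normalized source tables (hypothetical names and rows).
    CREATE TABLE Artists (ArtistId INTEGER PRIMARY KEY, Name TEXT NOT NULL);
    CREATE TABLE Albums (
        AlbumId  INTEGER PRIMARY KEY,
        Title    TEXT NOT NULL,
        ArtistId INTEGER NOT NULL REFERENCES Artists(ArtistId)
    );
    INSERT INTO Artists VALUES (1, 'Artist A'), (2, 'Artist B');
    INSERT INTO Albums VALUES (10, 'First Album', 1), (11, 'Second Album', 1), (12, 'Third Album', 2);

    -- Denormalized reporting table: the join is done once, up front,
    -- and the artist name is repeated on every album row.
    CREATE TABLE AlbumReport AS
        SELECT al.AlbumId, al.Title, ar.Name AS ArtistName
        FROM Albums AS al
        JOIN Artists AS ar ON ar.ArtistId = al.ArtistId;
""")

# Reports now read one table with no join at query time.
report = conn.execute(
    "SELECT ArtistName, Title FROM AlbumReport ORDER BY ArtistName, Title"
).fetchall()
print(report)
```

The trade-off is that the artist name is now stored once per album, so the reporting table has to be rebuilt or refreshed whenever the base tables change; otherwise it reintroduces the update anomalies that normalization removes.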